Rule of Three's Foray into Artificial Intelligence. . .

. . .because actual intelligence wasn't really working out.

There’s been a lot of chatter lately about artificial intelligence (AI), and its potentially dire implications for society. Forbes.com published an article more than two years ago (thanks a lot, Rule of Three Research team, for once again surfacing the most relevant and timely research data available for our use - lazy bastards!), entitled, “14 Ways AI Could Become a Detriment to Society” - not as catchy as Paul Simon’s “50 Ways to Leave Your Lover,” and certainly not as danceable, but, an interesting read nonetheless. Herewith is a summary of those 14 ways (you’ll likely note, as we did, that not a single one of them includes rhymes, such as “plan & Stan,” or “coy & Roy,” or “bus & Gus”):

1. AI could create a lack of privacy.

2. AI lacks transparency and is biased.

3. AI may hinder societal advancement.

4. AI can lead to unfair outcomes.

5. AI can limit our willingness to make difficult choices.

6. AI’s wrong predictions can lead to life-threatening events.

7. AI threatens jobs and security.

8. Cybercriminals can take advantage of AI for social engineering scams.

9. AI could become too complex.

10. AI lacks emotional intelligence.

11. Viewing AI as ‘human’ will disrupt adoption.

12. AI can prevent ‘out of the box’ thinking.

13. AI causes complacency.

14. AI poses a threat to personal safety.

Hmm. . .sounds pretty dangerous, doesn’t it? Well, danger is our middle name. Actually, that’s not true - “of” is our middle name. Regardless, we intend to step into the AI dealership, and kick the tires.

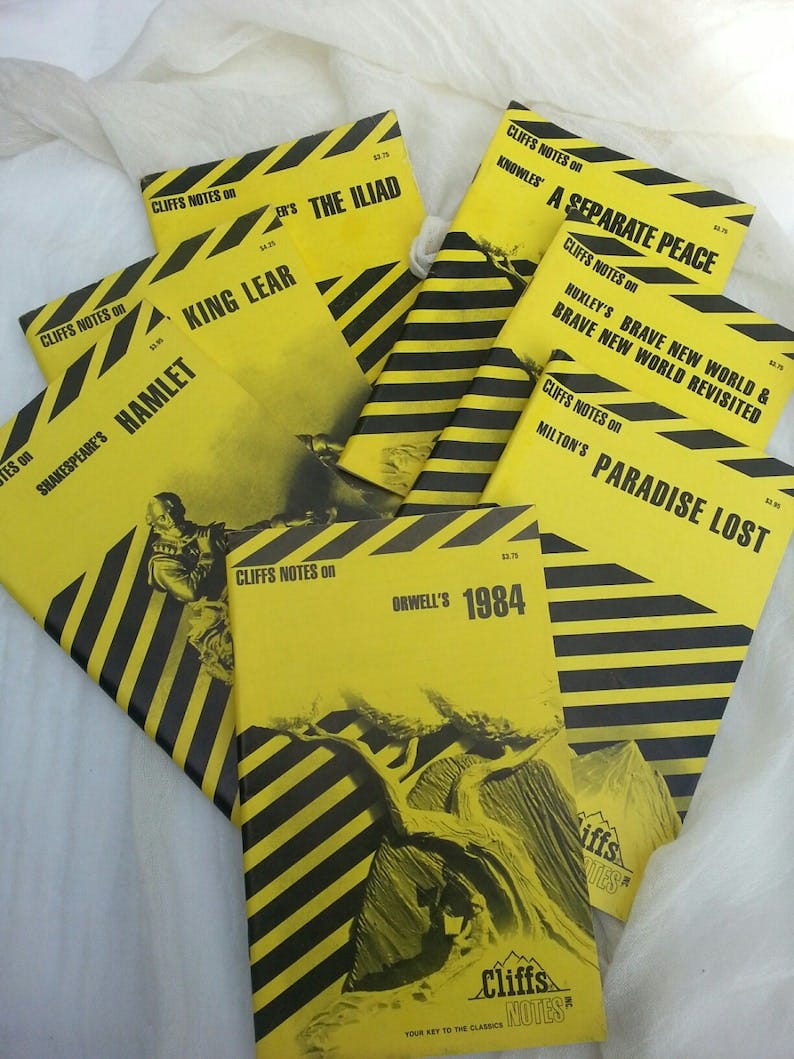

The threats identified by Forbes.com do not include other causes for consternation (or, hand-wringing - I couldn’t make up my mind - take your pick) which some fear AI will spark: AI representing the new-age version of CliffsNotes - remember those from your school days?. . .

. . .or, shortcuts for journalists, or, egad! substitutes for award-winning humor columns.

In the interest of responding to that last concern, we chose to road-test a product called, “ChatGPT,” developed by an organization known as “OpenAI,” which, according to their website, “. . .is an AI research and deployment company. Our mission is to ensure that artificial general intelligence benefits all of humanity.” Not one word about posing a threat to personal safety, or any of the other thirteen potential negative outcomes highlighted by Forbes.com. Of course, if memory serves, in that classic The Twilight Zone episode, entitled, “To Serve Man,” the aliens visiting Earth declare that they intend to provide humanitarian aid, by enabling inexpensive, boundless electricity, ending famine, and preventing international warfare. The title of their book is translated as, “To Serve Man,” and Earth’s leaders are impressed by their visitors’ good intentions. It’s only at the end of the episode, after many humans have volunteered to travel to the aliens’ home planet, that the rest of the book is translated, revealing that the book is, in fact, a cookbook. Boy, I sure hope there’s not a similar plot twist awaiting users of ChatGPT.

Rule of Three’s Subscription Acquisition department suggests that we sprinkle calls to action within the body of each weekly column, so, if you’ve enjoyed this piece thus far, why not subscribe right now? After all, it’s free!

In order to put ChatGPT through its paces, we developed the following request:

“Please craft a 1,000-word humor column on porpoises, utilizing the inimitable Rule of Three writing style.”

ChatGPT unfurled this response, complete within minutes:

“Porpoises. The forgotten cousin of the dolphin. They're like the Jan Brady to the dolphin's Marcia. But don't underestimate these little guys, they have a personality all their own. And today, we're going to dive into the world of porpoises with the help of the Rule of Three writing style.

First up, did you know that porpoises are actually pretty smart? They have a larger brain-to-body ratio than most animals, which means they're probably thinking some pretty deep thoughts. But what could a porpoise possibly be pondering? Maybe they're wondering why they always get mistaken for dolphins. Or maybe they're thinking about how they can up their game and become the cool kids of the ocean.

Secondly, porpoises are known for their unique communication style. They use a series of clicks, whistles, and squeaks to communicate with each other. It's like they have their own secret language. And who knows, maybe they're gossiping about the dolphins behind their backs. "Did you see Flipper's new dorsal fin? So last year."

Finally, let's talk about porpoise behavior. They're known for their playful antics, often riding waves and jumping out of the water. But did you know that porpoises are also skilled hunters? They use echolocation to locate their prey and then quickly dart in for the kill. It's like a game of underwater tag, but with a much higher stakes.

In conclusion, porpoises may not have the same level of fame as their dolphin cousins, but they have plenty of unique traits that make them stand out. They're smart, they have their own language, and they're playful yet fierce hunters. So the next time you're out on the water and you see a porpoise, give them the respect they deserve. And who knows, maybe they'll teach you a thing or two about living life to the fullest.”

So, what do you think? Does this piece meet the stringent standards to which you, a loyal Rule of Three reader, have become accustomed? I know what you’re thinking: Rule of Three has set the bar impossibly high, and there’s no way anyone, human or otherwise, could ever hope to meet that standard. Oh, that’s not what you’re thinking? You were instead wondering why you ended up reading some inane piece on porpoises, when you could be watching Seinfeld reruns? Well, as you might imagine, we here in the Content Quality Control department at Rule of Three have our own feedback to provide:

Firstly, ChatGPT’s piece checks in at a mere 307 words, when the specifications were very clear, in seeking a 1,000-word essay. I thought math was one of AI’s strong points - I have to say, we’re a little disappointed in the lack of effort demonstrated in this exercise.

We’re also unimpressed with the tired, old student essay-writer’s trick of parroting the question in the essay itself, in order to achieve the desired word-count - e.g. “And today, we're going to dive into the world of porpoises with the help of the Rule of Three writing style.” Many years ago, we probably began an essay on “The Primary Causes of the Peloponnesian War” with “There were many causes of the Peloponnesian War, but the primary causes of the Peloponnesian War included. . .,” believing that including that sentence would save us the labor of at least one sentence later in the essay. And, lest we forget, ChatGPT still came up woefully short of the required word-count.

We do admire ChatGPT lacing in a relevant pop-culture reference (relevant, if it’s 1972, that is): The Brady Bunch. And, Flipper’s new dorsal fin bit - priceless!

But, we would argue that its attempt, in the second paragraph, to highlight what porpoises might be thinking, would perhaps be an appropriate excerpt from a column featured in Rule of Two, and not Rule of Three - you’re one short, my friend.

Further, I’m not sure why I began my request with the word “please;” I suppose my mother’s constant exhortations to “be nice,” and “be polite,” and “say ‘please’ and ‘thank you’” continue to echo in my head, even though I know, intuitively, that ChatGPT is not a human person - perhaps that kindness, whether sincere, or faked, contributed to ChatGPT’s willingness “To Serve Man” - perhaps not.

All in all, a decent first effort. And, as I understand AI, repetition is expected to breed familiarity, which means that, in about twenty-five years or so, you wouldn’t be able to tell the difference between an award-winning Rule of Three column, and an AI-generated Rule of Three column.

That's right, here's another one - I'm sorry, it wasn't my idea. But, just click the friggin' button, and no one gets hurt - it’s free!

Before we bid farewell to ChatGPT, there is one additional task we asked it to perform for us:

“Please indicate the 14 ways in which AI could become a detriment to society. Also, what is the most effective method of disciplining a professional research staff which has proven to be quite lazy?”

ChatGPT again provided a lightning-fast response, as follows:

1. Job loss: AI and automation can displace workers, leading to job loss and economic insecurity.

2. Bias: AI systems can replicate and even amplify existing biases and discrimination present in society.

3. Privacy: AI systems can collect and analyze vast amounts of personal data, which can lead to privacy violations.

4. Security: AI systems can be vulnerable to cyberattacks, which can have serious consequences.

5. Dependence: Over-reliance on AI can make society vulnerable to system failures and breakdowns.

6. Autonomy: Autonomous AI systems can make decisions without human oversight, which can lead to unintended consequences.

7. Ethics: AI systems can raise ethical questions, such as the responsibility for actions taken by autonomous systems.

8. Transparency: AI systems can be opaque and difficult to understand, making it hard to audit and detect problems.

9. Accountability: It can be challenging to hold AI systems accountable for their actions and outcomes.

10. Economic inequality: AI can exacerbate economic inequality by concentrating wealth and power in the hands of a few.

11. Social isolation: AI and automation can lead to social isolation and disconnection, reducing social cohesion.

12. Psychological impact: AI can have psychological impacts on people, including anxiety, stress, and loneliness.

13. Human value: AI can devalue human skills and attributes, leading to a loss of purpose and identity.

14. Existential risk: AI could pose an existential threat to humanity if it becomes too powerful or is used for destructive purposes.

As for the second part of your question, disciplining a professional research staff that has proven to be quite lazy can be challenging. Here are some potential approaches:

1. Set clear expectations: Ensure that everyone on the team knows what is expected of them and has clear goals and deadlines to meet.

2. Hold team members accountable: If someone fails to meet expectations, hold them accountable for their performance.

3. Offer incentives: Provide rewards or recognition for good work and high performance.

4. Provide feedback: Regular feedback can help team members understand how they can improve their performance.

5. Invest in training and development: If the team lacks the necessary skills or knowledge, invest in training and development opportunities to help them improve.

6. Address underlying issues: If laziness is a symptom of deeper issues, such as burnout or lack of motivation, address those issues directly.

7. Consider disciplinary action: As a last resort, disciplinary action, such as a warning or termination, may be necessary if other measures fail to improve performance.

Wow! Talk about your self-awareness. ChatGPT should have no problem crafting its New Year’s resolutions, and this list doesn’t include one reference to losing weight, quitting smoking, or saving money; there is that pesky “destroying mankind” thing, but, hey! nobody’s perfect, right?

And, as for the Rule of Three Research team, I’m inclined to ignore ChatGPT’s first six suggestions, and jump right to no. seven - c’mon man, step up your game!